Hello readers

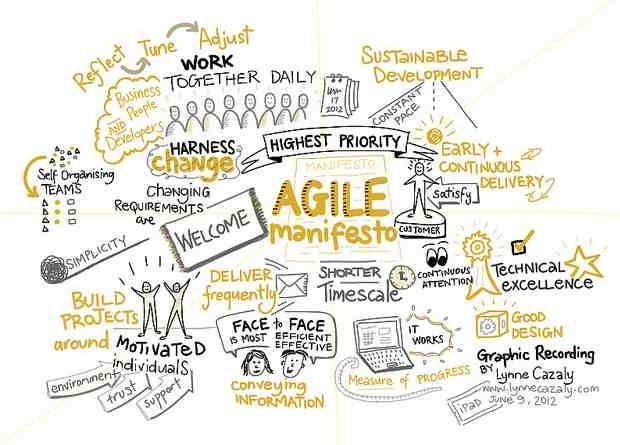

My article on the relationship of Agile Manifesto to the efforts and dilemmas of software testing has been published at stickyminds.com

Here are excerpts from the article – Please visit https://www.stickyminds.com/article/let-agile-manifesto-guide-your-software-testing and share your views too!

The Agile Manifesto is the basis of the agile process framework for software development. It sums up the thought process of the agile mind-set over the traditional waterfall methodology, and it’s the first thing we learn about when we set out to embrace an agile transition.

The Agile Manifesto applies to all things agile: Different frameworks like Scrum, DAD (Disciplined Agile Delivery), SAFe (Scaled Agile Framework), and Crystal all stem from the same principles.

Although its values are commonly associated with agile development, they apply to all people and teams following the agile mind-set, including testers. Let’s examine the four main values of the Agile Manifesto and find out how they can bring agility to teams’ test efforts.

Individuals and Interactions over Processes and Tools

Agile as a development process values the team members and their interactions more than elaborate processes and tools.

This value also applies to testers. Agile testing bases itself in testers’ continuous interaction and communication with the rest of the team throughout the software lifecycle, instead of a one-way flow of information from the developers or business analysts on specific milestones on the project. Agile testers are involved in the requirements, design, and development of the project and have constant interaction with the entire team. They are co-owners of the user stories, and their input helps build quality into the product instead of checking for quality in the end. Tools are used on a necessary basis to help support the cause and the processes.

For example, like most test teams, a team I worked on had a test management system in place, and testers added their test cases to the central repository for each user story. But it was left up to the team when in the sprint they wanted testers to do that. While some teams added and wrote their test scenarios directly on the portal, other teams found it easier to write and consolidate test cases in a shared sheet, get them reviewed, and then add them all to the repository portal all at one go.

While we did have a process and a tool in place to have all test cases in a common repository for each sprint, we relied on the team to decide what the best way for them was to do that. All processes and tools are only used to help make life easier for the agile team, rather than to complicate or over formalize the process.

Working Software over Comprehensive Documentation

With this value, the Agile Manifesto states the importance of having functioning software over exhaustively thorough documents for the project.

Similarly, agile testers embrace the importance of spending more time actually testing the system and finding new ways to exercise it, rather than documenting test cases in a detailed fashion.

Different test teams will use different techniques to achieve a balance between testing and documentation, such as using one-liner scenarios, exploratory testing sessions, risk-based testing, or error checklists instead of test cases to cover testing, while creating and working with “just enough” documentation in the project, be it through requirements, designs, or testing-related documents.

I worked on an agile project for a product where we followed Scrum and worked with user stories. Our approach was to create test scenarios (one-liners with just enough information for execution) based on the specified requirements in the user story. These scenarios were easily understood by all testers, and even by the developers to whom they were sent for review.

Execution of test scenarios was typically done by the same person who wrote them, because we had owners for each user story. Senior testers were free to buddy test or review the user story in order to provide their input for improvements before finalizing the tests and adding them into the common repository.

Customer Collaboration over Contract Negotiation

This is the core value that provides the business outlook for agile. Customer satisfaction supersedes all else. Agile values the customer’s needs and constant communication with them for complete transparency, rather than hiding behind contract clauses, to deliver what is best for them.

Agile testing takes the same value to heart, looking out for the customer’s needs and wishes at all points of delivery. What is delivered in a single user story or in a single sprint to an internal release passes under the scrutiny of a tester acting as the advocate for the customer.

Because there is no detailed document for each requirement, agile testers are bound to question everything based on their perception of what needs to be. They have no contract or document to hide behind if the user is not satisfied at the end of the delivery, so they constantly think with their “user glasses” on.

As an agile tester, when I saw a feature working fine, I would question whether it was placed where a user would find it. Even when the user story had no performance-related criteria, I would debate over whether the page load time of six seconds would be acceptable. After I saw that an application was functionally fine, I still explored and found that the open background task threads were not getting closed, leading to the user’s machine getting hung up after few hours of operation. None of these duties were a part of any specification, but they were all valuable to the user and needed correction.

Responding to Change over Following a Plan

Agile welcomes change, even late in development. The whole purpose of agile is to be flexible and able to incorporate change. So, unlike the traditional software development approaches that are resistant to change, agile has to respond to change and teams should expect to replan their plans.

In turn, such is the case for agile testing. Agile testing faces the burden of continuous regression overload, and topped with frequent changes to requirements, rework may double itself, leading to testing and retesting the same functionalities over and over again.

But agile testing teams are built to accommodate that, and they should have the ability to plan in advance for such situations. They can follow approaches like implementing thorough white-box testing, continuously automating tested features, having acceptance test suites in place, and relying on more API-level tests rather than UI tests, especially in the initial stages of development when the user interface may change a lot.

These techniques lighten the testing team’s burden so that they can save their creative energies to find better user scenarios, defects, and new ways to exercise the system under test.

Let the Agile Manifesto Guide Your Testing

When agile testers have dilemmas and practical problems, they can look to the Agile Manifesto for answers. Keep it in mind when designing and implementing test efforts; the Agile Manifesto’s values will guide you to the best choice for your team and your customers.

If you are interested in learning more about the Basics of Agile Testing, here is an interesting post for you!

Hope you liked my write-up, please share your views too!

Happy Testing!

Nishi