Manual testing plays a vital role in the software development lifecycle. Although manual testing has changed significantly over the years, it remains highly valuable. The manual testing process involves designing test cases based on software specifications and requirements.

The year 2025 is likely to mark a transformative phase for manual testing, with advanced technologies playing a key role. These technologies can further increase the value of manual QA testing services.

The major trends covered in this article are Test Augmentation with AI and ML, Exploratory Testing, Seamless Integration with DevOps, Shift Towards Quality Engineering Mindset, No-Code and Low-Code Testing Platforms, Collaborative Testing with Cross-Functional Teams, and Remote and Crowdsourced Testing.

The Current State of Manual Testing

To understand the current state of manual testing, you need to understand both its role in the Agile era and the challenges manual testers face today.

Importance of Software Testing in the Agile Era

In the Agile era, manual testing has arguably become more relevant, not less. Aspects of the software development lifecycle such as usability, exploratory testing, and human-centric design are difficult to address without it. A trusted manual testing service provider can help ensure that testing is holistic and that the resulting software functions well.

Both manual and automated testing are critical when developing new software. Manual testing supports software quality through human skills such as judgment and intuition, while automated testing uses tooling to execute large numbers of diverse test cases quickly and repeatedly.

Challenges Faced by Manual Testers Today

Manual testers currently face a host of challenges. Common ones include:

- Manual testing is time- and resource-intensive, which can place a heavy burden on manual testers.

- Demand for faster releases is rising. For instance, the popularity of CI/CD pipelines, which automate building, testing, and deploying code, puts pressure on manual testers to keep pace with automation, which may not be feasible.

- Scaling manual testing efforts is a daunting task. Moreover, the effort and hard work of manual testers may not be easily recognized.

Top Trends Shaping Manual Testing in 2025

Test Augmentation with AI and ML

Technologies such as artificial intelligence (AI) and machine learning (ML) are reshaping the manual testing landscape. Manual testers can use AI-powered tools to augment their work, with assistance in areas such as test case design, defect prediction, and test data generation. The main benefits include faster and more accurate test planning.

Emphasis on Exploratory Testing

In 2025, exploratory testing is likely to take center stage because it lets testers bring creativity to manual testing and uncover bugs that scripted test cases can miss. Testers who offer manual testing services can improve their exploratory testing skills by developing a holistic understanding of user needs.

Seamless Integration with DevOps

Another major trend shaping manual testing is seamless integration with DevOps. Embedding the manual testing process into DevOps pipelines and CI/CD workflows can make it more efficient and effective. Lightweight tools can also help fit manual testing into agile sprints.

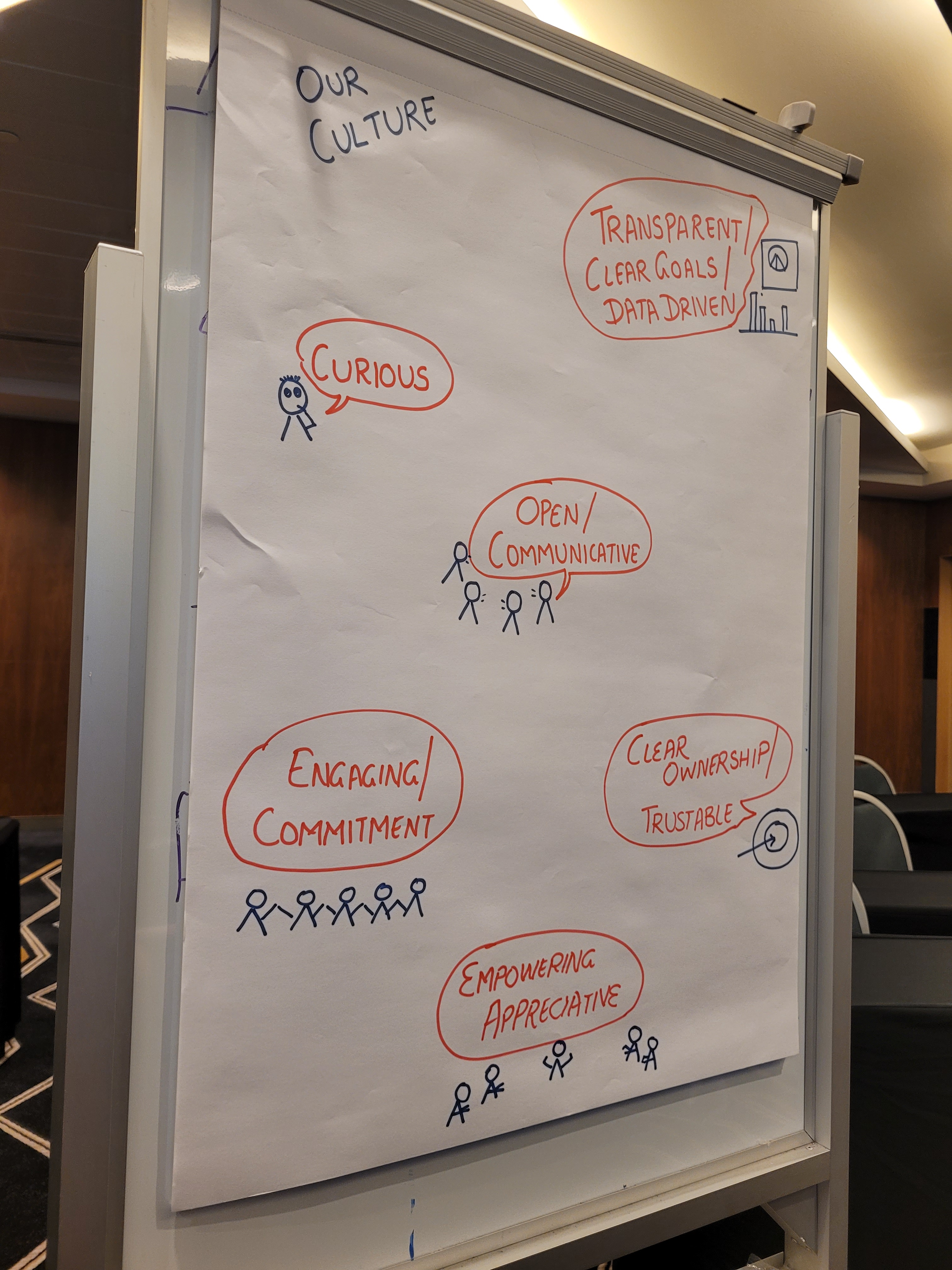

Shift Towards Quality Engineering Mindset

In the near future, teams are expected to shift towards a quality engineering mindset, and the role of manual testers is likely to change with it. Manual testers may become quality advocates within their teams, with a role that extends beyond testing. They will look past the functionality of the application to areas such as user experience, performance, and accessibility.

No-Code and Low-Code Testing Platforms

The year 2025 is likely to witness a surge in no-code and low-code tools. Such tools can enable non-technical testers to execute complex tests efficiently. As a result, manual testing workflows are likely to become simpler and more streamlined.

Collaborative Testing with Cross-Functional Teams

Manual testing is also likely to change as collaboration increases. Closer collaboration among stakeholders such as developers, testers, designers, and product owners can strengthen the testing process. Tools and practices that support seamless teamwork, such as Jira, may become even more widely adopted.

Remote and Crowdsourced Testing

The year 2025 is likely to see the continued rise of distributed teams and crowdsourced testing platforms. Remote work opens new possibilities for manual testing, and testers from diverse locations and backgrounds can take part in testing, further popularizing crowdsourced testing. The main advantages of this trend include diverse perspectives, greater flexibility, and improved cost-effectiveness.

Conclusion

The manual testing landscape continues to evolve. This article has identified several trends that could reinvent the manual testing process in 2025.

Manual testing remains highly relevant and continues to play a catalytic role in the software development lifecycle. Testers at leading manual QA testing services companies need to embrace these trends so they can adapt and continue to deliver high-quality manual testing.

This is a guest post by Harshil Malvi.

Author Bio:

Harshil Malvi, Founder & CEO of TabdeltaQA, is an expert in software testing. He leads the company with a focus on delivering high-quality testing services that help businesses create smooth and reliable digital experiences. With skills in automation testing, performance testing, and quality assurance, Harshil is dedicated to making sure software works perfectly and meets the needs of users.