With the software revolution, all excellent project managers know that if you hasten into the testing process, that particular project is considered to face a lot of issues.

Working with the best test management software and its particular strategy planned before testing starts is essential because software becomes more complicated with more sequences of code to evaluate.

Without a definite outline to develop, the QA team might not be sure what their duties are, what type of testing should be performed first and how the project’s progress is being determined.

The initial action to developing a test plan is to understand what you are attempting to perform with the testing strategy document as it will change software testing strategies.

According to this post created by TatvaSoft experts, the purpose of a test plan is to describe the complete scope of the QA method from both a comprehensive and granular standard.

Let’s take a look at one of their sections and understand why they consider that having a Test Plan for every business is beneficial—

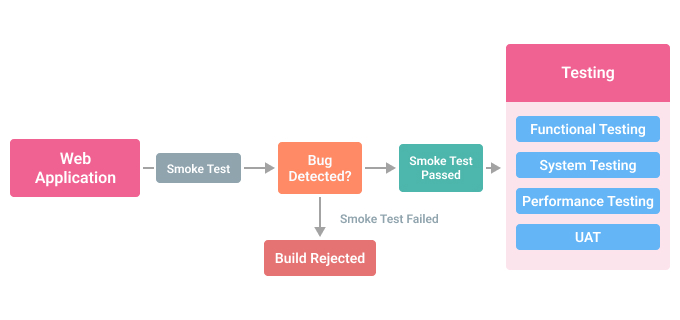

A Test Plan is a thorough document that outlines the test strategy, objectives, timeline, estimation, deliverables, and resources needed to execute software testing. The Test Plan assists us in determining the amount of work required to confirm the quality of the application being tested. The test plan is a blueprint for conducting software testing operations as a well-defined procedure that is meticulously documented. Read More

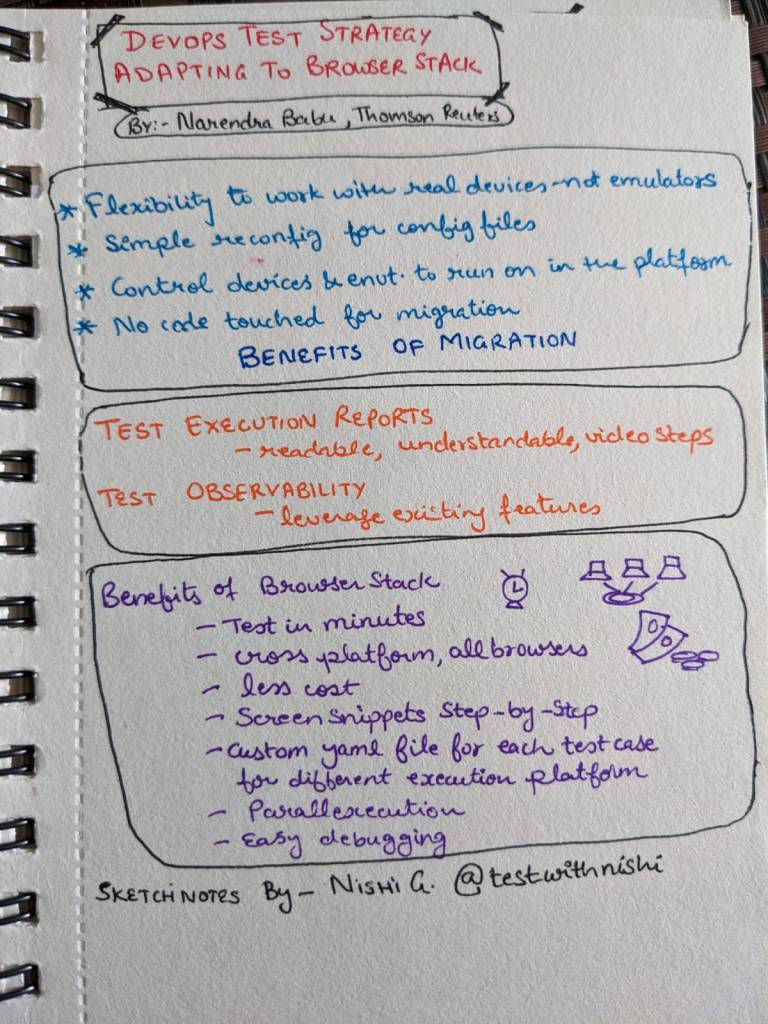

The software testing approach helps to create a test plan as it is one of the most essential aspects of the complete testing process, as it describes accurately what the testing team requires to succeed and how to proceed to achieve those aims. Test Strategy is a crucial part of the Test Plan.

The task of writing a whole document test strategy from start may seem daunting, especially for bigger projects. If QA managers need a systematic approach to this responsibility, however, they’ll discover that planning and compiling an efficient and comprehensive test strategy isn’t so hard.

Here are a few tips for your team’s benefit-

Steps To Create A Test Plan Using Best Test Strategies!

Understand the product or feature you’re testing

We will begin with one of the essential aspects of software testing strategy, which is having a broad knowledge of the product or feature before you begin developing a test plan for it.

For instance, let’s assume you’ve just worked over a website redesign and need to test it before you launch it in the market. For that, you will require to know these below-mentioned factors:

- Discuss with the designer and developer to know the extent, goals, and functionality of the website.

- Review the project documentation which is developed by the project manager that includes SOW, project plan, and the responsibilities in the project management tool.

- Conduct a product examination to know the functionality, user movement, and defects.

This action provides you with the setting to write a good test strategy document, purposes and begin to plan out the sources you’ll require to create it.

Define the test objectives and their criteria

As you describe every other test you’re continuing to work on, you are required to apprehend when your test is “finished.”

This implies establishing the pass and fail standards for each particular test, and some of the information we can discuss, such as departure and delay criteria.

To perform this, you’ll need to recognise different system metrics that you’re monitoring and select what progress means for them.

For instance, if you were working on a performance test you need these measures to succeed:

- Response time: Complete time to forward a request and receive a response.

- Average load time: Average time it needs to address all requests.

- Peak response time: The most extended time it needs to complete a request.

- Wait time: It is the duration it needs to get the first byte after a demand is sent.

- Requests per second: The number of requests it can manage during that one second.

- Events passed/failed: The whole number of passed or failed requests.

Don’t worry you can proceed with testing and repeating forever. So you are required to determine what’s best for your software out and in the palms of users.

Plan the test environment

The outcomes of your test plan always depend on the point you’re testing as well as the environment you’re testing it in.

As a component of the test strategy document scope, you are required to decide what hardware, software, operating system, and tool compounds you’re running to test.

This is a condition where it pays to be particular. For instance, if you’re going to define an operating system to be utilised throughout the test plan, specify the OS edition/version rather than just mentioning the name.

Define Test Objective

Test Objective is the complete purpose and performance of the test execution. The purpose of the testing is to discover as many software errors as possible; guarantee that the software during the test is bug-free before release.

To determine the test purposes, you need to perform these 2 steps:

- Record all the software features such as functionality, appearance, and GUI that may require testing.

- Determine the purpose or the object of the test according to features

Use these steps to get the test goal of your testing project

Also, you can use the ‘TOP-DOWN’ approach to discover the website’s features that may require testing. In this process, you break down the app following the test to component and sub-component.

Conclusion

The test strategy document provides a definite concept of what the test team will perform for the entire project. It is a latent document that means it won’t fluctuate during the project life cycle.

The one who prepares this document must have enough expertise in the product field, as this is the record that is running to manage the complete team, and it won’t fluctuate during the project.

Test strategy documents need to be distributed to the complete testing team before the testing ventures start.

<This is a guest post by Matthew Jones – who is a tech enthusiast. and he likes to share his bylines.>